Abstract Factory in cross-platform testing of native mobile apps

Manual testing is time-consuming, and with automation, some tests work better on Android and some on iOS. How can you streamline your work and make the testing of native mobile apps more reliable? The answer is programming design patterns.

Mobile apps are often designed and developed for both Android and iOS platforms at the same time. They can be developed as native, web, or hybrid apps.

Native apps are built for specific operating systems (iOS or Android), web apps for mobile web browsers, and hybrid apps have characteristics of both native and web apps.

In this blog post, we are going to talk about native apps. Implementing the same mobile app design for different operating systems results in two similar but distinct apps. Those apps may look and behave the same, but they are developed separately, with different frameworks and technologies, for different types of devices and operating systems.

Challenges with native app testing

One of the problems that arises with the development of these apps is testing them.

We can test them manually, but that comes with a bunch of problems. First of all, you have two apps, so you have to execute each test twice. With more features being added, regression testing becomes more difficult, more time-consuming, and, with that, more expensive.

A great idea is to automate the tests. Appium offers almost the same interface for both Android and iOS. This means that one test code should work for both apps, as long as we set aside locators and capabilities, which we can easily handle with a couple of if-else statements in our code. In practice, things don’t work out like that. Since those are two different apps that just follow the same design, the test code doesn’t always work as we want it to. The tests often come out flaky: some work better on Android, and some work better on iOS. Those tests are, therefore, unreliable.
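To make the if-else approach to locators concrete, here is a minimal TypeScript sketch. The `Platform` type, the `loginButtonSelector` helper, and the selector values are hypothetical and made up for illustration; only the selector syntax (accessibility id and Android UiAutomator) follows what WebdriverIO accepts.

```typescript
// Hypothetical platform flag; in a real project it would come from
// the capabilities or an environment file.
type Platform = "android" | "ios";

// One helper per locator, with one branch per platform.
function loginButtonSelector(platform: Platform): string {
  if (platform === "android") {
    // Android UiAutomator selector (the resource id is made up)
    return 'android=new UiSelector().resourceId("com.example.app:id/login")';
  } else {
    // Accessibility id selector (the id is made up)
    return "~loginButton";
  }
}

console.log(loginButtonSelector("android"));
console.log(loginButtonSelector("ios"));
```

This covers locators, but every further difference in behavior between the two apps would need another branch like this one.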

How can we solve this?

One solution that drastically helps with this problem comes in the form of programming design patterns. Programming design patterns are reusable solutions to common problems in software design, development, and architecture.

Each pattern has its pros and cons: it solves one problem but introduces some others. If the pros outweigh the cons for you, consider implementing a programming design pattern.

I have come up with a way to use the Abstract Factory design pattern to manage Screen Objects with Appium. (I will use the term Screen Object instead of Page Object because it better fits the context.) We will go over how to implement it to reduce test flakiness and increase test reliability.

Abstract Factory

Abstract Factory is a creational design pattern and one of the Gang of Four design patterns. As stated in “Design Patterns: Elements of Reusable Object-Oriented Software” (a book by four outstanding designers: Erich Gamma, Richard Helm, Ralph Johnson, and John Vlissides), the intent of this pattern is to

“Provide an interface for creating families of related or dependent objects without specifying their concrete classes”

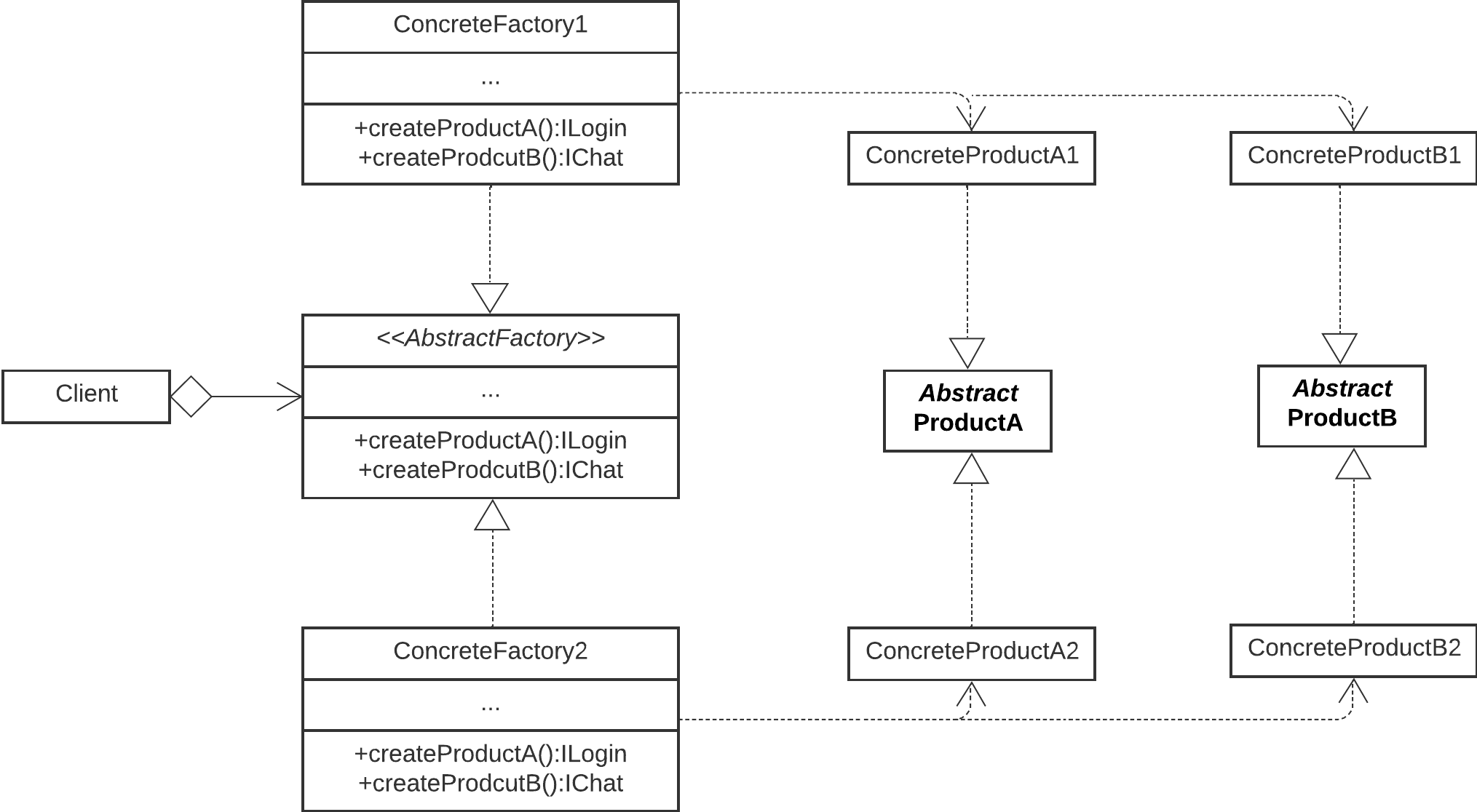

A diagram of a generic Abstract Factory

How does Abstract Factory solve the problem?

So, how do we apply this to our problem? First, we have to go over the participants in the Abstract Factory pattern:

- Abstract Factory: Interface for creating product objects

- Concrete Factory: Implementation of Abstract Factory

- Abstract Product: Interface for a Product

- Concrete Product: Implementation of an Abstract Product

- Client: Uses only Abstract Factory

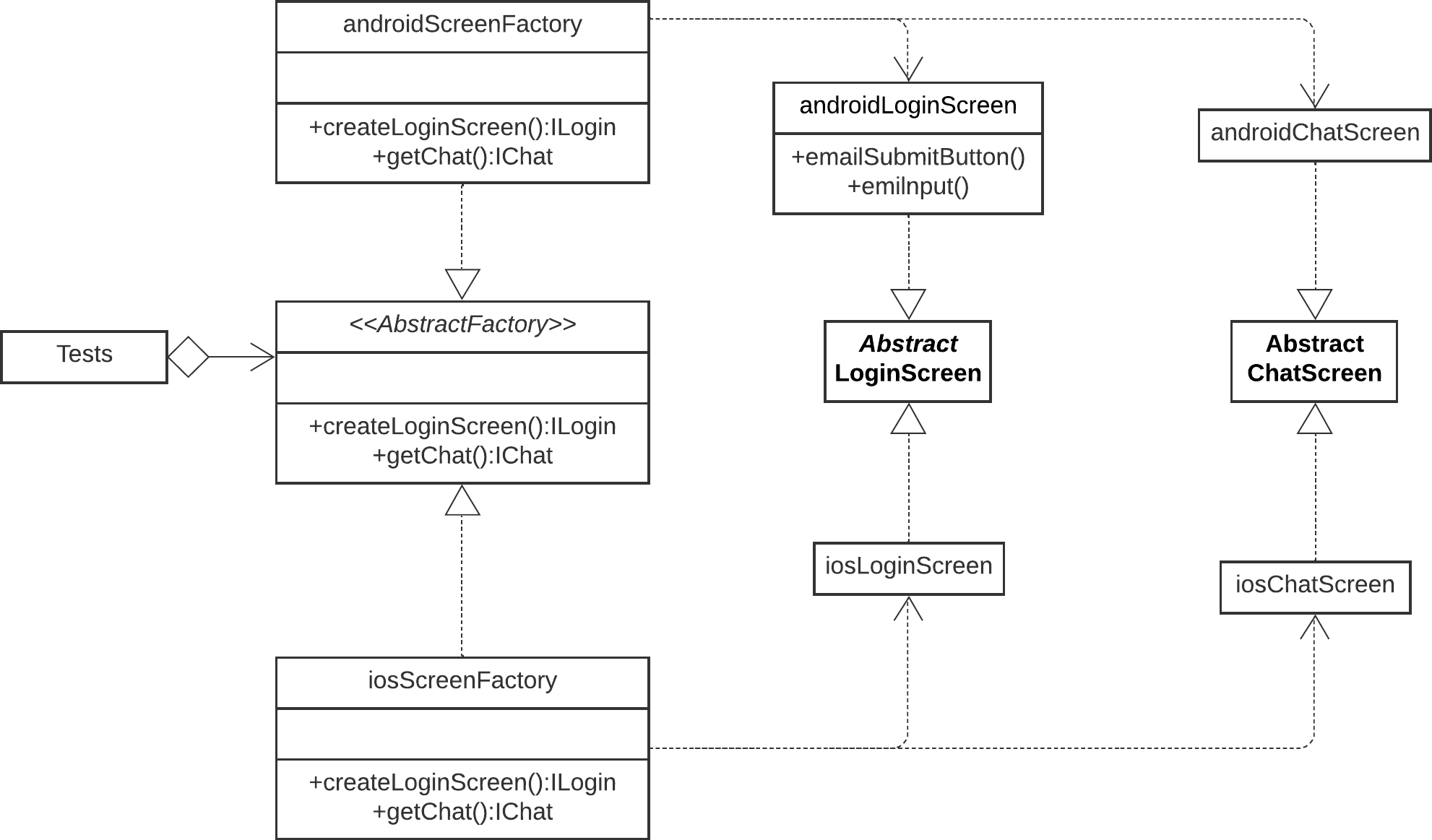

A diagram of our Abstract Factory

When we map the Abstract Factory pattern to our problem, our actors become:

- Abstract Factory -> Abstract Screen Factory: Interface for creating Screen Objects

- Concrete Factory -> Concrete Screen Factory: Implementation of Abstract Screen Factory

- Abstract Product -> Abstract Screen Object: Interface for a Screen Object

- Concrete Product -> Screen Object: Implementation of an Abstract Screen Object

- Client -> Tests: Uses only Abstract Screen Factory

The Abstract Screen Factory defines functions that return Abstract Screen Objects. Each Concrete Screen Factory implements the Abstract Screen Factory and returns Screen Objects. An Abstract Screen Object contains declarations of all locators, helper functions, and text content that we will need for that specific screen, and a Screen Object implements it. In our case, the Tests are the users of our Screen Objects. There will be two of every Screen Object and two Concrete Screen Factories, one for each operating system.
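The mapping described above can be sketched in TypeScript roughly as follows. This is a simplified illustration under stated assumptions, not the project's actual code: the `Driver` interface and `RecordingDriver` class are hypothetical stand-ins for the Appium/WebdriverIO driver so that the sketch runs on its own, all selector values are made up, and the extra keyboard step on iOS is an invented example of a platform difference.

```typescript
// Hypothetical stand-in for the Appium/WebdriverIO driver.
// Real driver calls are asynchronous; this fake is synchronous for brevity.
interface Driver {
  tap(selector: string): void;
  type(selector: string, text: string): void;
}

// Abstract Screen Object: locators and helpers the tests may rely on.
interface LoginScreen {
  readonly usernameField: string;
  readonly passwordField: string;
  readonly loginButton: string;
  login(username: string, password: string): void;
}

// Abstract Screen Factory: returns Abstract Screen Objects only.
interface ScreenFactory {
  loginScreen(): LoginScreen;
}

// Screen Object for Android (selector values are made up).
class AndroidLoginScreen implements LoginScreen {
  readonly usernameField = 'android=new UiSelector().resourceId("com.example.app:id/username")';
  readonly passwordField = 'android=new UiSelector().resourceId("com.example.app:id/password")';
  readonly loginButton = 'android=new UiSelector().resourceId("com.example.app:id/login")';
  private readonly driver: Driver;
  constructor(driver: Driver) { this.driver = driver; }

  login(username: string, password: string): void {
    this.driver.type(this.usernameField, username);
    this.driver.type(this.passwordField, password);
    this.driver.tap(this.loginButton);
  }
}

// Screen Object for iOS: same interface, different locators and login steps.
class IosLoginScreen implements LoginScreen {
  readonly usernameField = "~username";
  readonly passwordField = "~password";
  readonly loginButton = "~loginButton";
  private readonly keyboardDoneKey = "~Done";
  private readonly driver: Driver;
  constructor(driver: Driver) { this.driver = driver; }

  login(username: string, password: string): void {
    this.driver.type(this.usernameField, username);
    this.driver.type(this.passwordField, password);
    // Assumed iOS-only step: dismiss the keyboard before tapping the button.
    this.driver.tap(this.keyboardDoneKey);
    this.driver.tap(this.loginButton);
  }
}

// Concrete Screen Factories, one per operating system.
class AndroidScreenFactory implements ScreenFactory {
  private readonly driver: Driver;
  constructor(driver: Driver) { this.driver = driver; }
  loginScreen(): LoginScreen { return new AndroidLoginScreen(this.driver); }
}

class IosScreenFactory implements ScreenFactory {
  private readonly driver: Driver;
  constructor(driver: Driver) { this.driver = driver; }
  loginScreen(): LoginScreen { return new IosLoginScreen(this.driver); }
}

// Fake driver that records actions instead of talking to a device.
class RecordingDriver implements Driver {
  readonly actions: string[] = [];
  tap(selector: string): void { this.actions.push(`tap ${selector}`); }
  type(selector: string, text: string): void { this.actions.push(`type ${selector} "${text}"`); }
}

// The test (the Client) depends only on the abstract types.
function loginAsDemoUser(screens: ScreenFactory): void {
  screens.loginScreen().login("demo", "secret");
}

const iosDriver = new RecordingDriver();
loginAsDemoUser(new IosScreenFactory(iosDriver));
console.log(iosDriver.actions);
```

Note that `loginAsDemoUser` never mentions Android or iOS. The platform-specific steps live entirely inside the Screen Objects.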

After all of this is in place, we need a single place in the code that controls which type of factory is instantiated, based on a condition that we will probably read from an environment file or similar. The last step is to use the Abstract Factory to create Screen Objects in our test code.
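One possible shape for that selection point is sketched below in TypeScript. The `PLATFORM` variable name, the `createScreenFactory` function, and the trimmed-down screen and factory types are assumptions made for this example. In a real run, `process.env` (or values loaded from an environment file) would be passed in instead of the literal object.

```typescript
// Trimmed-down abstract types so the sketch is self-contained.
interface LoginScreen {
  readonly loginButton: string;
}

interface ScreenFactory {
  loginScreen(): LoginScreen;
}

class AndroidScreenFactory implements ScreenFactory {
  loginScreen(): LoginScreen {
    // Made-up Android UiAutomator selector
    return { loginButton: 'android=new UiSelector().resourceId("com.example.app:id/login")' };
  }
}

class IosScreenFactory implements ScreenFactory {
  loginScreen(): LoginScreen {
    // Made-up accessibility id selector
    return { loginButton: "~loginButton" };
  }
}

// The only place in the code base that knows the concrete factories.
function createScreenFactory(env: Record<string, string | undefined>): ScreenFactory {
  const platform = (env["PLATFORM"] ?? "").toLowerCase();
  switch (platform) {
    case "android":
      return new AndroidScreenFactory();
    case "ios":
      return new IosScreenFactory();
    default:
      throw new Error(`Unsupported PLATFORM: "${platform}"`);
  }
}

// Test code asks the abstract factory for screens and never names a platform.
const screens: ScreenFactory = createScreenFactory({ PLATFORM: "ios" });
console.log(screens.loginScreen().loginButton);
```

Failing fast on an unknown value is a design choice in this sketch. Falling back to a default platform would also work, but it could hide a misconfigured environment.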

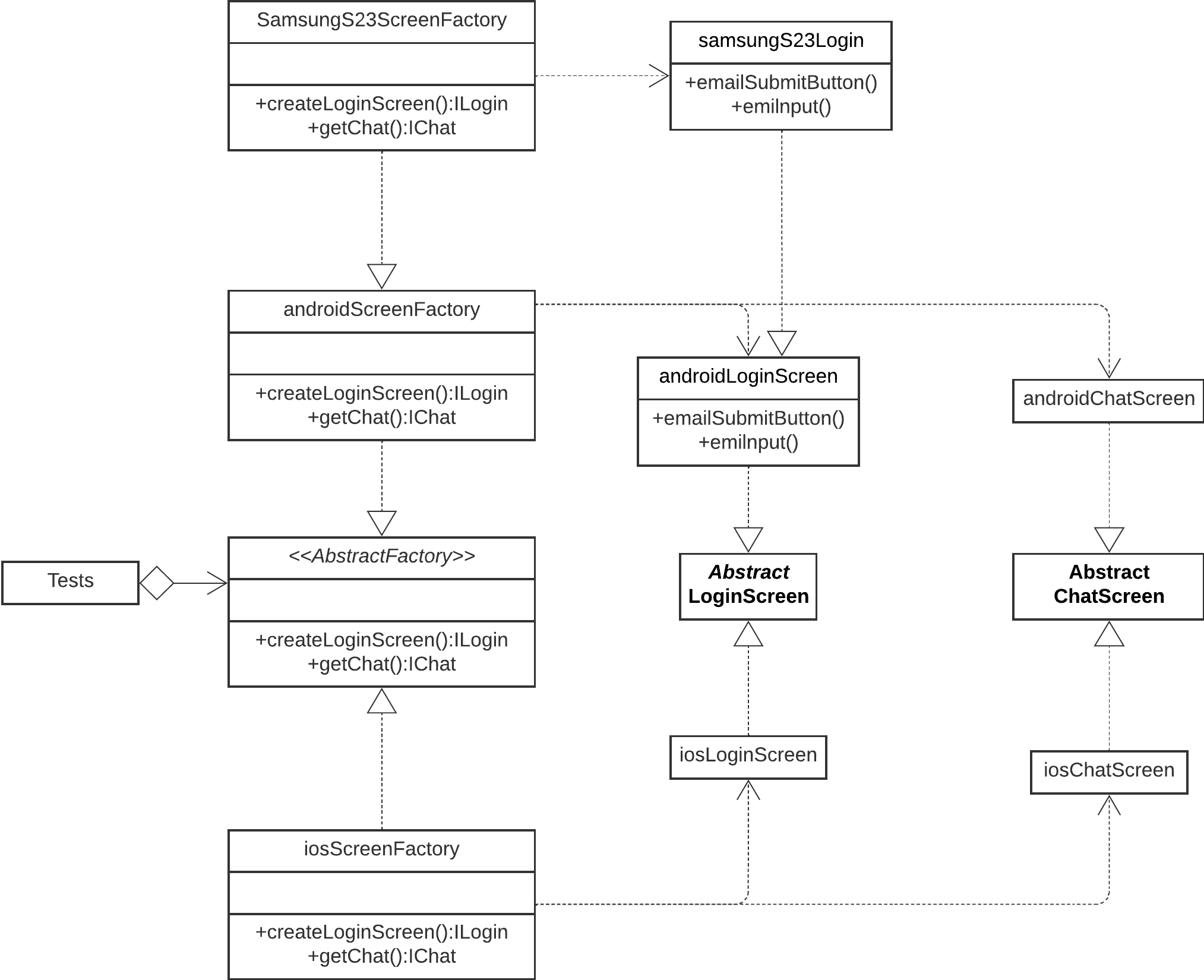

Problems with different simulators, emulators, and devices

One more problem that we may run into involves iOS and Android simulators, emulators, and devices, depending on how we run our tests. Test code that works perfectly for one device may not work for another. This is not because the app doesn't work on that device or because the tests are flaky but because that specific device slightly differs from others, and some tests fail when using it. This can happen because of one locator, helper function, or client API function that doesn't work for that specific device, although it did for all others.

- In that case, we can extend a new Concrete Factory for that specific device from an existing Concrete Factory.

- We also need to extend new Screen Objects out of those that we have issues with.

- Then, we need to override functions that cause problems and define implementation specific to that device.

- Lastly, we need to reference those new screen objects in the newly extended factory.

We have now upgraded our test code to work for that specific device without changing what works for others.
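The four steps above can be sketched in TypeScript as follows. Everything device-specific here is hypothetical: the "small screen" device, the idea that its keyboard covers the login button, and the `Driver` and `RecordingDriver` stand-ins that replace the real Appium/WebdriverIO driver so the sketch can run on its own.

```typescript
// Hypothetical stand-in for the driver; real calls would be asynchronous.
interface Driver {
  tap(selector: string): void;
  hideKeyboard(): void;
}

interface LoginScreen {
  readonly loginButton: string;
  submit(): void;
}

interface ScreenFactory {
  loginScreen(): LoginScreen;
}

// Existing Screen Object and Concrete Factory that work for most Android devices.
class AndroidLoginScreen implements LoginScreen {
  readonly loginButton: string = "~loginButton";
  protected readonly driver: Driver;
  constructor(driver: Driver) { this.driver = driver; }
  submit(): void { this.driver.tap(this.loginButton); }
}

class AndroidScreenFactory implements ScreenFactory {
  protected readonly driver: Driver;
  constructor(driver: Driver) { this.driver = driver; }
  loginScreen(): LoginScreen { return new AndroidLoginScreen(this.driver); }
}

// Extended Screen Object: overrides only the function that causes problems.
class SmallScreenAndroidLoginScreen extends AndroidLoginScreen {
  submit(): void {
    // Assumed device quirk: the keyboard covers the button, so hide it first.
    this.driver.hideKeyboard();
    super.submit();
  }
}

// Extended factory: references the new Screen Object, inherits everything else.
class SmallScreenAndroidScreenFactory extends AndroidScreenFactory {
  loginScreen(): LoginScreen { return new SmallScreenAndroidLoginScreen(this.driver); }
}

// Fake driver that records actions instead of talking to a device.
class RecordingDriver implements Driver {
  readonly actions: string[] = [];
  tap(selector: string): void { this.actions.push(`tap ${selector}`); }
  hideKeyboard(): void { this.actions.push("hideKeyboard"); }
}

const quirkyDevice = new RecordingDriver();
new SmallScreenAndroidScreenFactory(quirkyDevice).loginScreen().submit();
console.log(quirkyDevice.actions);
```

The base classes stay untouched, so the devices that already pass keep running exactly the same code.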

In the image above, we see a diagram of an extended Abstract Factory.

Code example

Below, you can see an example, written for Appium with WebdriverIO, of how to structure the Screen Objects with Abstract Factory:

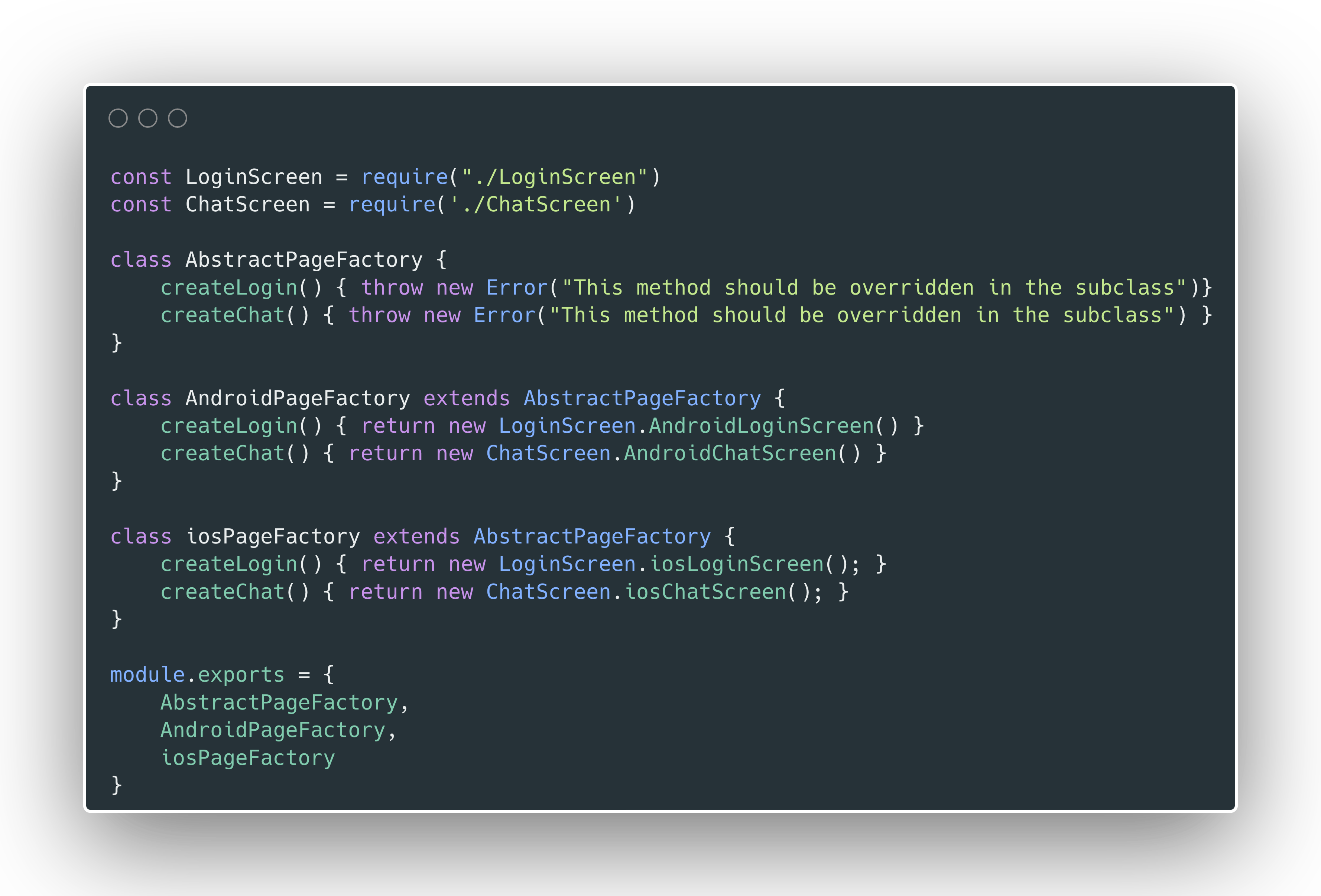

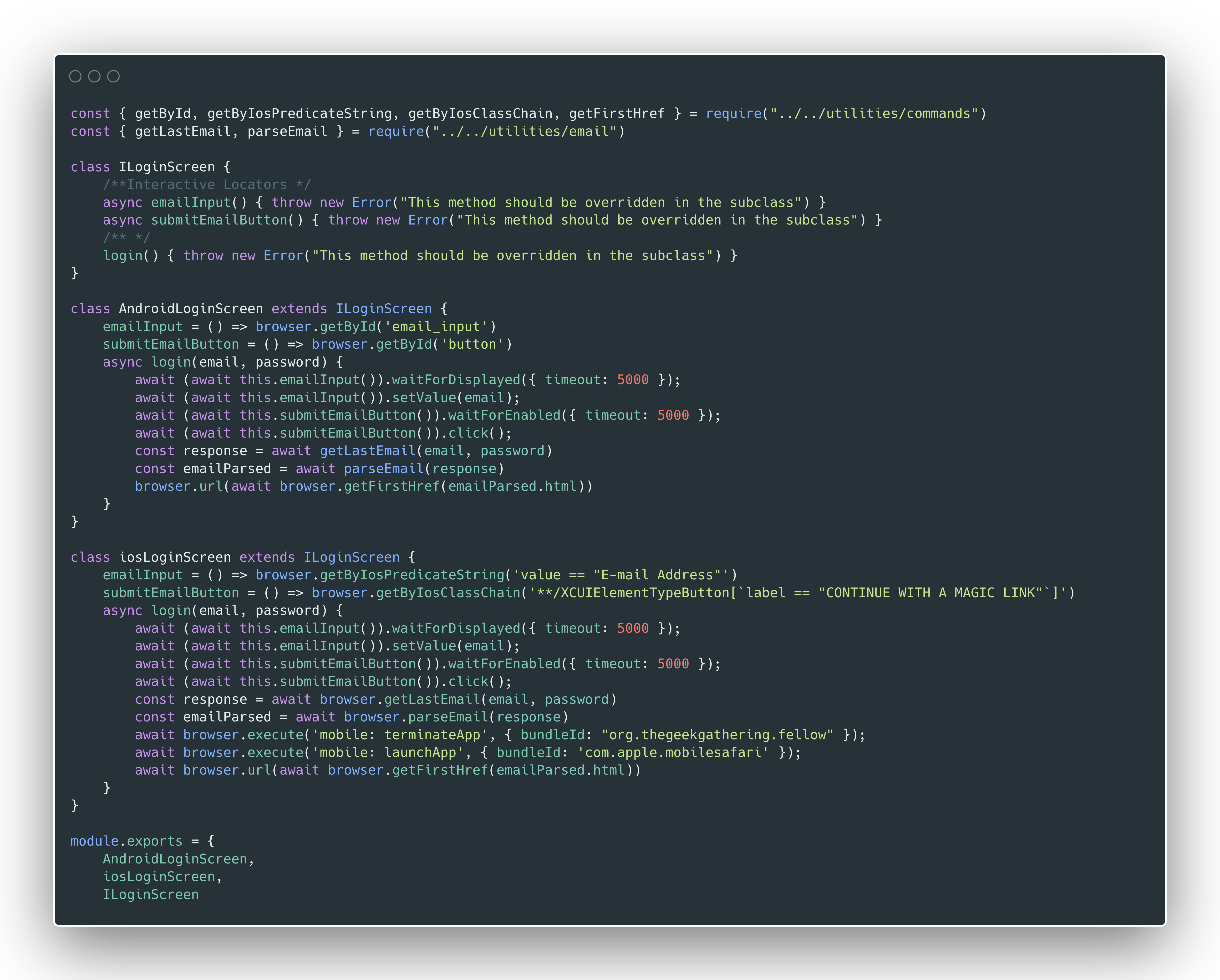

Abstract Factory and Concrete implementation, all in the same file

In the image above, we use the Abstract Screen and Concrete Screen implementations.

Notice that the login functions work differently. In this kind of situation, our Abstract Factory implementation shines.

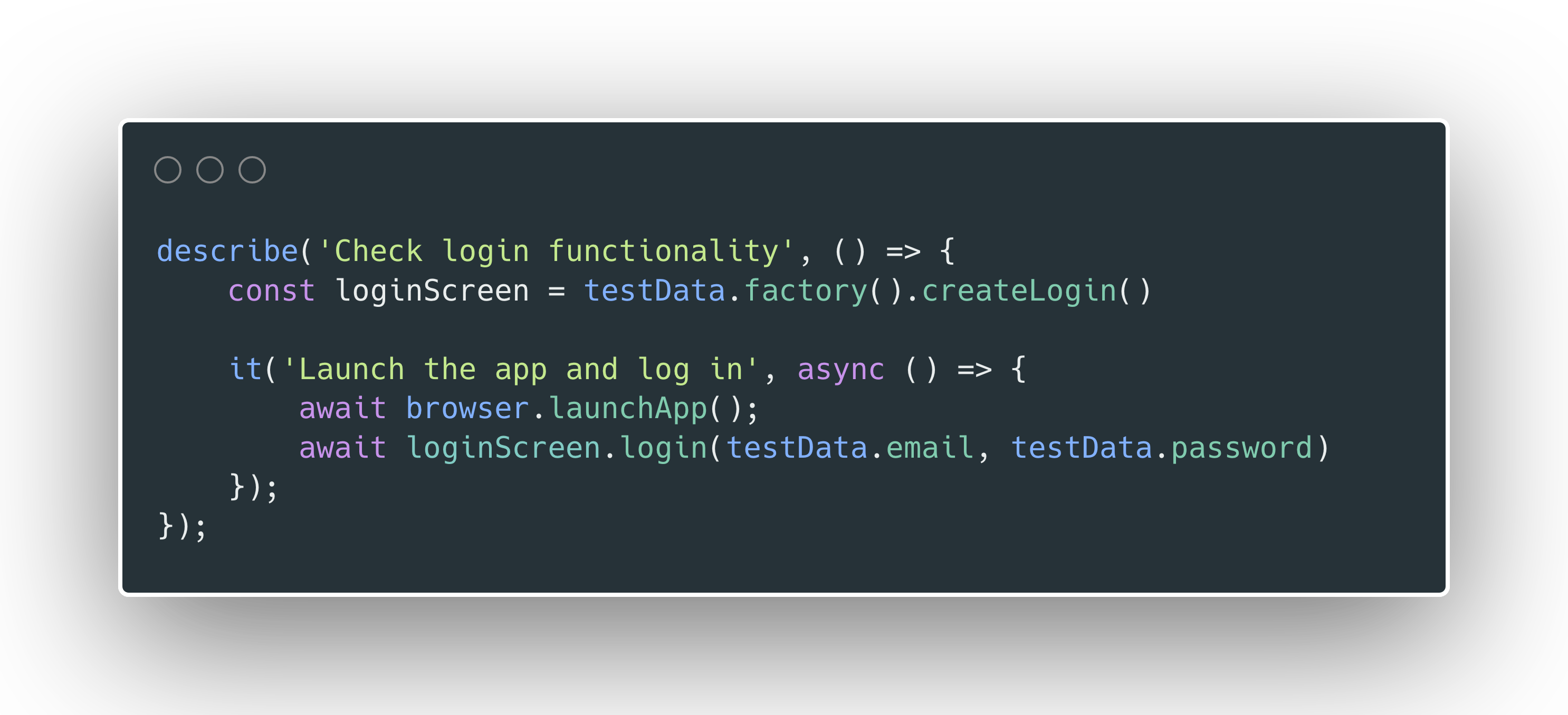

The usage of screen objects through the Abstract Factory

Pros and cons

The main pro of this kind of solution is that a single test codebase runs more reliably across different devices and operating systems. By allowing us to define different behaviors for each operating system and/or device, it solves a bunch of annoying problems. It also removes the need for if-else statements in our test code.

Cons come in the form of increased complexity of our code. A skillful programmer shouldn’t have many problems dealing with some more complexity. In my opinion, that complexity is acceptable if it makes tests more reliable.

Part of that increased complexity comes in the form of more files than before, which makes it easy to get lost. The number of files triples with this approach. One thing that helped me with this is defining all classes and interfaces related to a specific screen in one file. Screen files written like this didn’t come out excessively large, since you can’t fit that much on a mobile screen anyway. In my case, the files were between 200 and 350 lines, which is acceptable by my standards.

Is this the best solution?

We have just gone over the pros and cons. How good a solution this is depends on the specific automation, apps, developer, programming language, framework, environment, project complexity, and other factors.

It has worked well for me, and I encourage you to try it out. You may find it solves some of the problems you face regarding your test automation. It also stops you from having to develop two different test automation projects for native apps in your project.

Hey, you! What do you think?

They say knowledge has power only if you pass it on - we hope our blog post gave you valuable insight.

If you are in need of custom software that will be tested by dedicated professionals in their field or want to share your opinion, feel free to contact us.

We'd love to hear what you have to say!